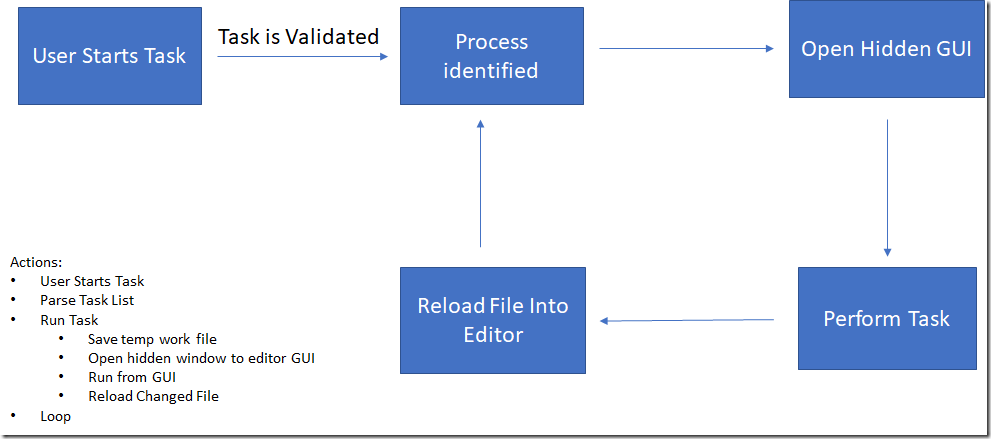

One of the components getting a significant overhaul in MarcEdit 7 is how the application processes tasks. This work started in MarcEdit 6.3.x, when I introduced a new —experimental bit for processing tasks from the command line. This bit shifted task processing from within the MarcEdit application to running directly against the libraries where the underlying functions for each task were run. The process was marked as experimental, in part, because task processing has always been tied to the MarcEdit GUI. This is how a task works in MarcEdit:

When running a task, MarcEdit opens and closes the corresponding edit windows and processes the entire file for each edit. So, if there are 30 steps in a task, the program will read the entire file 30 times. This is wildly inefficient, but it was also the easiest way to add tasks to MarcEdit 6 given the limitations of the program's current structure.

In the console program, I started to experiment with accessing the underlying libraries directly, but still maintained the structure where each task item represented a new pass through the file. So, while the UI components were no longer being interacted with (improving performance), the program was still doing a lot of file reading and writing.

In MarcEdit 7, I re-architected how the application interacts with the underlying editing libraries and, as part of that, included the ability to process tasks at that more abstract level. The benefit is that all tasks on a record can now be completed in one pass. So, using the example of a 30-item task: rather than needing to open and close a file 30 times, the process now opens the file once and then runs all defined task operations on each record. The tool can do this because all task processing has been pulled out of the MarcEdit application and pushed into a task broker. This new library accepts from MarcEdit the file to process and the defined task (and associated tasks), and then facilitates task processing at a record, rather than file, level. I then modified the underlying library functions, which was actually really straightforward given how streams work in .NET.
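To make the single-pass idea concrete, here is a minimal sketch of what record-level task processing could look like. It is not MarcEdit's actual task broker: the class and method names (`TaskBrokerSketch`, `RunTasks`) are hypothetical, each task step is modeled as a plain string-to-string function, and it assumes records in the mnemonic file are separated by a blank line.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Text;

// Hypothetical illustration only; not MarcEdit's real task broker API.
public static class TaskBrokerSketch
{
    // Reads the input once. Each time a complete record has been collected,
    // every task step is applied to it in order before the record is written.
    // Returns the number of records processed.
    public static int RunTasks(TextReader reader, TextWriter writer,
                               IReadOnlyList<Func<string, string>> steps)
    {
        int count = 0;
        var record = new StringBuilder();
        string line;
        while ((line = reader.ReadLine()) != null)
        {
            if (line.Length == 0)
            {
                // Assumption: a blank line marks the end of a record.
                count += Flush(record, writer, steps);
            }
            else
            {
                record.Append(line).Append('\n');
            }
        }
        // Handle a final record that has no trailing blank line.
        count += Flush(record, writer, steps);
        return count;
    }

    private static int Flush(StringBuilder record, TextWriter writer,
                             IReadOnlyList<Func<string, string>> steps)
    {
        if (record.Length == 0) return 0;
        string text = record.ToString();
        record.Clear();
        foreach (var step in steps)
        {
            text = step(text); // all steps run on this one record
        }
        writer.Write(text);
        writer.Write('\n'); // blank line between records
        return 1;
    }
}
```

With 30 steps in the list, the input is still read exactly once; only the per-record loop over the steps grows.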

Within MarcEdit, all data is generally read and written using the StreamReader/StreamWriter classes, unless I specifically need to access data at the binary level. In those cases, I use a MemoryStream. The benefit of using the StreamReader/StreamWriter classes, however, is that they derive from the abstract TextReader and TextWriter classes. .NET also has StringReader and StringWriter classes, which let C# read and write strings like a stream; they too derive from TextReader and TextWriter. This means that I've been able to make the following changes to the functions, and re-use all the existing code while still providing processing at both a file and a record level:

    // Pass isFile = false to treat sSource as record text rather than a file path.
    string function(string sSource, string sDest, bool isFile = true) {
        // File mode: output holds the destination path.
        // String mode: output collects the processed text.
        StringBuilder output = new StringBuilder(sDest);
        System.IO.TextReader reader = null;
        System.IO.TextWriter writer = null;

        if (isFile) {
            reader = new System.IO.StreamReader(sSource);
            writer = new System.IO.StreamWriter(output.ToString(), false);
        } else {
            output.Clear();
            reader = new System.IO.StringReader(sSource);
            writer = new System.IO.StringWriter(output);
        }

        //…Do Stuff

        return output.ToString();
    }

Because both are a TextReader/TextWriter, I now have access to the functions needed to process either data stream as if it were a file. This means that I can now handle file- or record-level processing using the same code, as long as both data sources are in the mnemonic format. Pretty cool.
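For a concrete feel of the pattern, here is a small, self-contained sketch with the "do stuff" part filled in by a simple line-by-line find and replace. The names (`DualModeSketch`, `ReplaceText`) and the replace operation itself are my own stand-ins for illustration, not functions from the MarcEdit libraries.

```csharp
using System;
using System.IO;
using System.Text;

// Hypothetical illustration of the file-or-string pattern described above.
public static class DualModeSketch
{
    // File mode: source and dest are paths; the destination path is returned.
    // Record mode (isFile = false): source is the text itself, dest is ignored,
    // and the processed text is returned.
    public static string ReplaceText(string source, string dest,
                                     string find, string replaceWith,
                                     bool isFile = true)
    {
        var output = new StringBuilder(dest);
        TextReader reader;
        TextWriter writer;

        if (isFile)
        {
            reader = new StreamReader(source);
            writer = new StreamWriter(output.ToString(), false);
        }
        else
        {
            output.Clear();
            reader = new StringReader(source);
            writer = new StringWriter(output);
        }

        // From here on the code only sees TextReader/TextWriter,
        // so the same loop serves both modes.
        using (reader)
        using (writer)
        {
            string line;
            while ((line = reader.ReadLine()) != null)
            {
                writer.Write(line.Replace(find, replaceWith));
                writer.Write('\n');
            }
        }

        return output.ToString();
    }
}
```

Calling `DualModeSketch.ReplaceText(recordText, "", "foo", "bar", false)` works on a single record held in memory, while leaving `isFile` at its default treats the first two arguments as source and destination paths.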

What does this mean for users? It means that in MarcEdit 7, tasks will be supercharged. In testing, I'm seeing tasks that used to take 1, 2, or 3 minutes to complete now run in a matter of seconds. So, while there are a lot of really interesting changes planned for MarcEdit 7, this enhancement feels like the one that might have the biggest impact for users, as it will represent significant time savings when you consider processing time over the course of a month or year.

Questions? Let me know.

–tr